Learning To Program - A Beginner's Guide - Part Three - How Does a Computer Work?

How does a computer work? This is quite an interesting problem. It is rather similar to the question "How long is Britain's coastline?" - it depends how closely you look (1).

How does a computer work (I) - the end user perspective

As an end user, I might say "I make sure it has got some power, and then press the on switch. It shows me the manufacturer's logo for a few seconds, then asks me for a password or PIN. After that, I see a menu that lets me choose one of the programs I have available. When I choose one, it opens up on the screen, and I can click controls with a mouse, or my finger, and type on my keyboard (or perhaps talk to it) to control what it does. The display updates, and sometimes it plays a sound, or vibrates, depending on what I've asked it to do. Sometimes, I save my document or picture or whatever, which I can see is stored somewhere on the computer, or in a cloud service like Dropbox or SkyDrive. Or I can share it with someone using email or Skype."

Another end-user of a computer might say "I open the door, and put my washing inside. I twiddle a dial to tell it what temperature to use; it weighs the washing to work out how big the load is for me (fancy!) and then it runs through a series of washing, rinsing and drying cycles depending on what I chose. It beeps at me every few minutes when it is done, to remind me to open the door and hang it out."

They are both perfectly good end-user descriptions of how a computer works. It's roughly equivalent to zooming out a map of Britain, drawing round the coast, and using that to measure the coastline.

Here's one I drew myself.

Not a bad approximation, and extremely useful at that very high level (even if I seem to have missed out some of the west coast of Scotland, and Wales has grown a bit of a belly). You could use a map at this scale to compare the relative length of coastline of various different countries, for example; but it is certainly not the only view of the world.

How does a computer work (II) - electrons are involved

Right down at the other end of the spectrum, we might ask someone who designs the electronic components that make up a computer - the processors, graphics cards and memory. They might talk about transistors, the fundamental building blocks of digital computers. The transistor (in this context) acts as a switch - it can be 'on' or 'off'. By assembling those transistors in particular ways, we can build 'logic gates' - the decision-making bits of a computer processor that will, for example, cause one switch to be 'off' if another two are both 'on', or 'on' if either of them is 'off' (we call that a NAND gate - more on that sort of thing later). If pressed further, they might talk about how processors are built from thousands, millions or even billions of these transistors, each of which is etched onto layers of semiconductor, metals and insulating materials, and how the shape and choice of materials influence the way the electrons make their way through these microscopic devices to produce the on/off states.
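
To make the NAND gate a little more concrete, here is a minimal sketch in Python. The names (nand, truth_table, label) are my own, made up purely for illustration, and a real gate is built from transistors rather than code; this only mimics the on/off behaviour described above.

```python
def nand(a: bool, b: bool) -> bool:
    """Output is 'off' only when both inputs are 'on'; otherwise it is 'on'."""
    return not (a and b)


def truth_table(gate):
    """List (input_a, input_b, output) for every combination of two switches."""
    return [(a, b, gate(a, b)) for a in (False, True) for b in (False, True)]


def label(state: bool) -> str:
    """Turn True/False into the 'on'/'off' wording used in the text."""
    return "on" if state else "off"


if __name__ == "__main__":
    # Print one line per combination of the two input switches.
    for a, b, out in truth_table(nand):
        print(label(a), label(b), "->", label(out))
```

Of the four possible combinations of the two input switches, only the one where both are 'on' produces an 'off' output.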

This view of the world is also very useful, and extremely important. Using our coastline analogy, it is a bit like going down to a beach with a tape measure, and measuring round every rock pool and every land slip: really important if you want to understand the detail of the changing coastal landscape and ecosystem.

Neither of these views is all that useful if you just want to drive from Brighton to Portsmouth, though.

How does a computer work (III) - the programmer's perspective

As programmers, we sit somewhere between those two perspectives. Our goal is to try to translate what the end user wants (that very high level, interactive 'real world' view), into the world of the electrons, by writing "software". At first glance, that seems quite daunting - and it can be - but fortunately, lots of very smart people have been working on this problem for a good few decades now, and we don't have to leap straight from one to the other in a single bound.

Instead, we think in terms of layers of detail or complexity. We hide "deeper" or more complicated understandings of the way the computer works with a layer of approximation or abstraction that is easier to deal with, and better suited to the particular problem we are trying to solve.

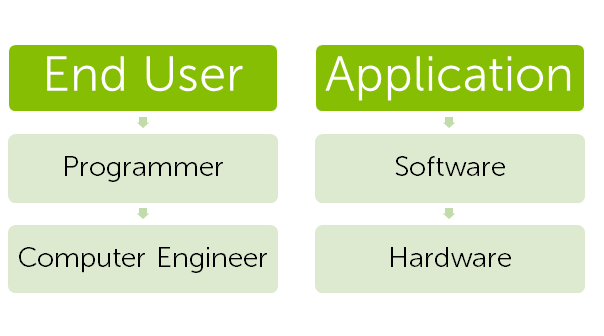

We could redraw the three perspectives we've just seen as a very simple layered diagram.

At the top of the stack (because we draw these layered diagrams as a pile of boxes, one on top of the other, we often call it a stack), we have the end-user's "application"-centric view of the world. The programmer supports this, with their "software" view of the world and that, in turn, is built on a "hardware" view of the world. We're going to see this layering model again and again as we learn to program, so it is a good idea to get used to thinking that way.
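
Here is a rough sketch of that three-layer stack in Python, borrowing the washing machine from earlier. Everything in it is hypothetical: the class names, the programme temperatures, and the pretend "physics" in which the heater warms the water by 5 degrees per tick are all assumptions made up for illustration, not how any real machine works. The point is only that each layer talks to the one directly beneath it.

```python
class Hardware:
    """Bottom layer: only knows about a switch being on or off."""

    def __init__(self):
        self.heater_on = False
        self.temperature = 20  # simulated water temperature, degrees C
        self.log = []          # record of every switch change

    def set_heater(self, on: bool) -> None:
        self.heater_on = on
        self.log.append("heater on" if on else "heater off")

    def tick(self) -> None:
        # Pretend physics: the heater adds 5 degrees per tick while it is on.
        if self.heater_on:
            self.temperature += 5


class Software:
    """Middle layer: turns 'reach this temperature' into switch operations."""

    def __init__(self, hardware: Hardware):
        self.hardware = hardware

    def heat_to(self, target: int) -> None:
        self.hardware.set_heater(True)
        while self.hardware.temperature < target:
            self.hardware.tick()
        self.hardware.set_heater(False)


class Application:
    """Top layer: what the end user sees, a dial with named programmes."""

    PROGRAMMES = {"delicates": 30, "cottons": 60}  # made-up temperatures

    def __init__(self, software: Software):
        self.software = software

    def run(self, programme: str) -> str:
        self.software.heat_to(self.PROGRAMMES[programme])
        return f"{programme} wash done"


if __name__ == "__main__":
    hardware = Hardware()
    machine = Application(Software(hardware))
    print(machine.run("cottons"))
    print(hardware.log)
```

The end user only ever calls run; they never need to know that a heater switch exists. Equally, the hardware knows nothing about "cottons" or "delicates".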

I'm a programmer - why do I care what goes on in the hardware layer?

When you draw it out like that, you can see that there is an obvious relationship between each layer: the way they are stacked on top of each other. The software that the programmer has to build to meet the needs of the end user is, in part, determined by the way the computer engineer designed the hardware. Conversely, the hardware design has to consider the needs of the programmer in order to be able to realise the goals of the end user of the system.

And, of course, many programmers spend their whole careers right down near the hardware level. If you are a software engineer working with people who build devices, you might have to write "drivers" which provide the interface between the physical hardware and the rest of the software stack. You might find yourself investigating software problems using oscilloscopes and logic analysers! Others (the majority) are miles up the stack, at a level of abstraction that means they rarely think about the hardware they are running on. (Although perhaps they ought to more often than they do!)

The best programmers usually have a good understanding of several different abstractions, and can see how they represent different views on the same system, choosing the best one for the job.

With that in mind, we're going to start out somewhere near the bottom of the stack by taking a look at one representation of the hardware box - from a programmer's perspective.

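(1) We've got Roger Penrose to thank for this analogy. (The coastline question itself is usually credited to Benoit Mandelbrot's 1967 paper "How Long Is the Coast of Britain?", which built on measurements by Lewis Fry Richardson.)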